The Large Model Systems Organization develops large models and systems that are open, accessible, and scalable.

Latest Blog

Announcing the Recipient of the 2026 LMSYS PhD Fellowship

We are delighted to announce the first recipient of the LMSYS Fellowship Program: Will Lin. Following the launch of our Fellowship Program and careful review of applications, we selected Will for his...

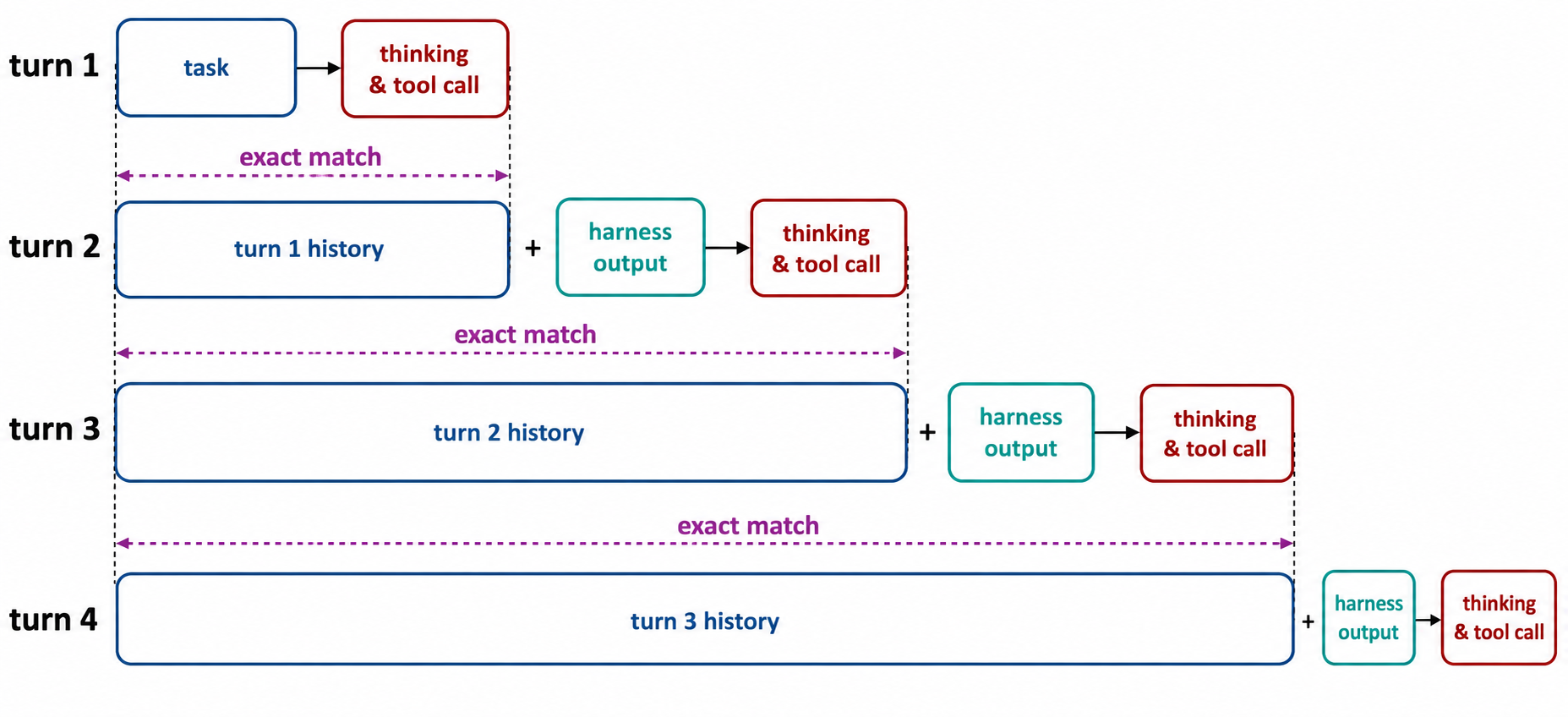

No Token Left Behind: Demystifying Token-In-Token-Out in Miles

In agentic RL, a rollout is not a single generation. It is a chain of model calls, tool outputs, harness messages, and resumed generations. Token-In-Token-Out (TITO) is a design principle that address...

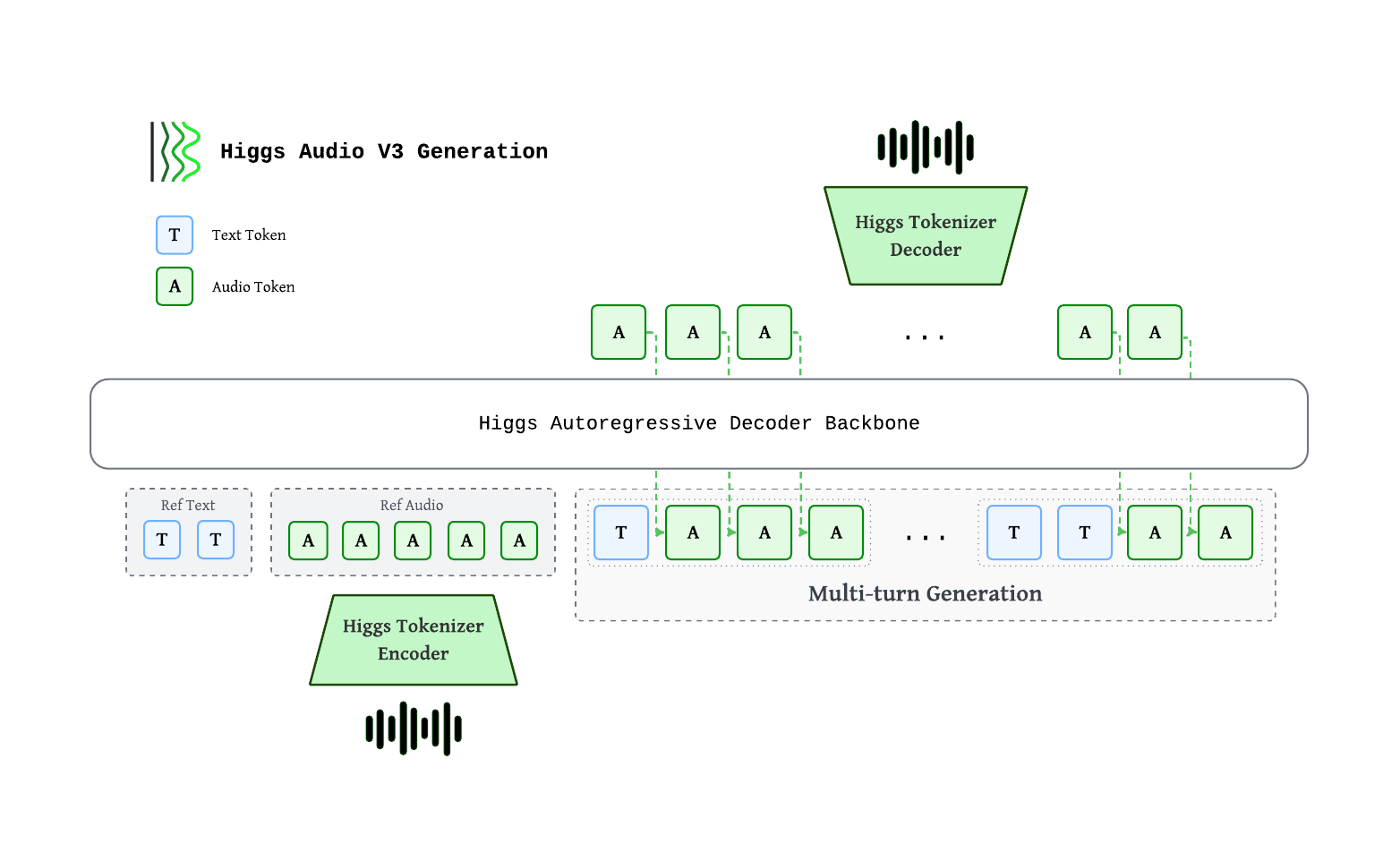

Higgs Audio v3 TTS on SGLang-Omni: Real-Time, Controllable Speech for Voice Agents

Today we are announcing end-to-end serving for Higgs Audio v3 TTS on SGLang-Omni. Higgs Audio v3 TTS is Boson AI's text-to-speech model for conversational voice agents: it generates natural and expres...

Projects

Our Sponsors & Partners

Backed by leading companies and institutions advancing AI research.

Voltage Park, NVIDIA, Nebius, Google Cloud, AtlasCloud, a16z, AMD, InnoMatrix, Laude Institute, Hyperbolic, NovitaAI, Verda Cloud, Sky9, Kaggle, MBZUAI, Together, RunPod, Anyscale, HuggingFace